Large Language Models (LLMs) are incredibly powerful — but also unpredictable. Two identical prompts can produce different responses. Slight wording changes can drastically affect output quality. This makes one skill absolutely critical for anyone building with AI:

Prompt Engineering.

Prompt engineering is the discipline of designing inputs that guide AI models toward reliable, safe, and high-quality outputs. It’s the difference between a fun demo and a production-ready AI system.

In this blog, we’ll go beyond basic tips and explore how prompt engineering works in real-world AI systems.

Why Prompt Engineering Matters

LLMs are not deterministic programs. They are probabilistic systems trained on massive datasets. That means:

- Outputs vary with wording

- Context changes behavior

- Instructions compete with user inputs

- Ambiguity causes hallucinations

Without structured prompting:

- AI becomes unreliable

- Hallucinations increase

- UX becomes inconsistent

- Guardrails break

Prompt engineering introduces control, predictability, and structure into AI workflows.

What Is a Prompt?

A prompt is more than a question. It’s a structured instruction that defines:

- Task

- Context

- Constraints

- Output format

- Tone

A good prompt is closer to a mini program than a sentence.

Anatomy of a Production Prompt

Most real-world prompts contain multiple components.

1. System Instruction

Defines model behavior and personality.

Example

You are a senior backend architect who explains concepts clearly and concisely.

This sets tone, expertise, and response style.

2. Task Definition

What exactly should the model do?

Bad:

Explain caching

Better:

Explain distributed caching to a mid-level backend engineer in under 200 words.

Specificity improves output quality dramatically.

3. Context Injection

Provide relevant data or background.

Used heavily in RAG systems.

Context:

Redis is an in-memory data store used for caching and messaging.

More context = less hallucination.

4. Constraints

Bound the model’s behavior.

Examples:

- Word limits

- No speculation

- Use bullet points

- Avoid marketing tone

Constraints reduce variability.

5. Output Format

For production systems, formatting is critical.

Example

Return output in JSON:

{

"summary": "",

"pros": [],

"cons": []

}

Structured outputs make AI integration reliable.

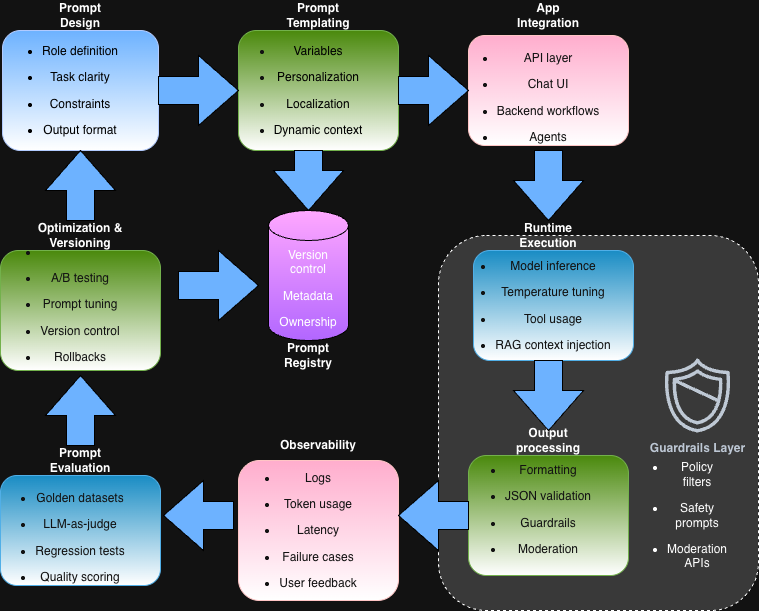

From Prompts to Prompt Templates

In real systems, prompts are rarely static.

They are templates with dynamic variables:

You are a helpful AI assistant.User question: {query}

User role: {user_type}

Tone: {tone}Answer clearly and concisely.

Templates allow:

- Personalization

- A/B testing

- Localization

- Feature experimentation

This is how modern AI products scale.

Core Prompt Engineering Techniques

Let’s explore practical techniques used in production.

1. Role Prompting

Assign a role to guide expertise and tone.

Example

You are a cloud cost optimization expert.

Why it works:

- Activates relevant training distributions

- Improves reasoning depth

- Reduces generic answers

2. Few-Shot Prompting

Provide examples of desired outputs.

Example

Classify sentiment:Input: I love this product

Output: PositiveInput: This is terrible

Output: NegativeInput: {user_input}

Output:

This teaches the model patterns without fine-tuning.

Few-shot prompting is extremely powerful for:

- Classification

- Formatting

- Style control

3. Chain-of-Thought Prompting

Encourage step-by-step reasoning.

Think step by step before answering.

This improves:

- Logical reasoning

- Math tasks

- Complex problem-solving

However, in production:

- Often hidden from users

- Used internally for quality

4. Instruction Decomposition

Break complex tasks into smaller steps.

Instead of:

Analyze this startup and give investment advice.

Use:

1. Summarize the business model

2. Identify strengths

3. Identify risks

4. Provide balanced recommendation

This reduces hallucinations and improves clarity.

5. Output Structuring

Force structured outputs for reliability.

Example

Return response in JSON only. No explanation outside JSON.

This enables:

- Automation

- API integration

- Validation layers

6. Temperature and Sampling Control

Prompting isn’t just text — parameters matter.

Key controls:

- Temperature

- Low → deterministic

- High → creative

- Top-p

- Controls diversity

Production tip:

- Use low temperature for APIs

- Higher for creative writing

Prompt Engineering for Different Use Cases

Different applications require different strategies.

1. Chatbots

Focus:

- Tone consistency

- Safety

- Context retention

Use:

- Strong system prompts

- Memory summarization

- Guardrails

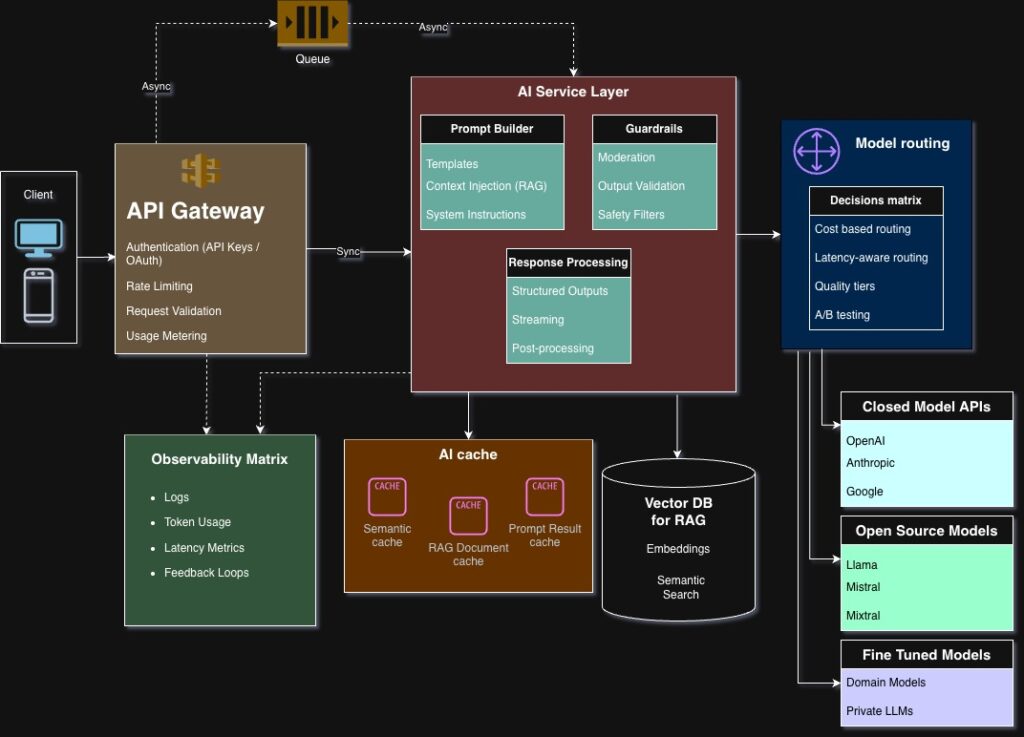

2. RAG Systems

Focus:

- Grounding in retrieved knowledge

- Reducing hallucinations

Best practices:

- Clear separation of context vs instruction

- Citations in output

- Context window optimization

3. AI Agents

Agents require:

- Tool instructions

- Decision boundaries

- Failure handling prompts

Example:

If unsure, ask clarification questions instead of guessing.

This dramatically improves agent reliability.

4. Structured Data Extraction

Key goals:

- Precision

- Deterministic outputs

Use:

- Strict schemas

- Few-shot examples

- Validation layers

Common Prompt Engineering Mistakes

Let’s look at pitfalls seen in real systems.

1. Vague Instructions

Ambiguous prompts cause hallucinations.

Bad:

Tell me about databases

2. Overloading Context

Too much irrelevant context reduces accuracy.

Prompt engineering is also about context pruning.

3. Mixing Instructions with User Input

Separating system instructions from user content is critical for safety.

This prevents prompt injection attacks.

4. Ignoring Output Validation

Even great prompts fail sometimes.

Always validate outputs in production.

Prompt Versioning and Management

As AI systems mature, prompts become assets.

Best practices:

- Store prompts in Git

- Use version tags

- Track prompt performance

- A/B test prompt variants

Some teams even build:

- Prompt registries

- Prompt analytics dashboards

This elevates prompt engineering to an engineering discipline.

Prompt Testing Strategies

Testing prompts is different from traditional testing.

You can:

- Use golden datasets

- Track regression cases

- Run batch prompt evaluations

- Score outputs with LLM-as-a-judge

This ensures prompt changes don’t silently degrade quality.

Guardrails and Safety Prompting

Prompt engineering is a core layer of AI safety.

Examples:

If the request involves illegal activity, refuse politely.

If unsure, respond with "I don't have enough information."

These soft constraints work alongside:

- Moderation models

- Policy filters

- Output validators

Real-World Example: AI Code Assistant

Let’s say you’re building an AI coding assistant.

A production prompt might include:

- Role: Senior software engineer

- Context: User’s repo structure

- Constraints: Follow project style guide

- Output: Code only, no explanation

- Safety: Avoid insecure patterns

Without structured prompting, outputs become inconsistent and unsafe.

The Future of Prompt Engineering

Prompt engineering is evolving rapidly.

Emerging trends:

- Prompt compilers

- Structured prompting languages

- Automatic prompt optimization

- Tool-aware prompting

- Retrieval-aware prompting

Eventually, prompts may look more like programs than text.

Key Takeaways

- Prompt engineering brings reliability to AI systems

- Structured prompts outperform natural prompts

- Templates and versioning are essential for scale

- Guardrails and validation are non-negotiable

- Prompt engineering is becoming a core AI engineering skill

As AI systems mature, prompt engineering will evolve from an art into a formal discipline.

What’s Next?

Now that you know how to control LLM behavior through prompts, the next step is understanding how AI systems ground themselves in real knowledge.

In the next blog, we’ll explore:

RAG Architecture — How AI Systems Use External Knowledge

This is where AI systems move from generic intelligence to domain expertise.