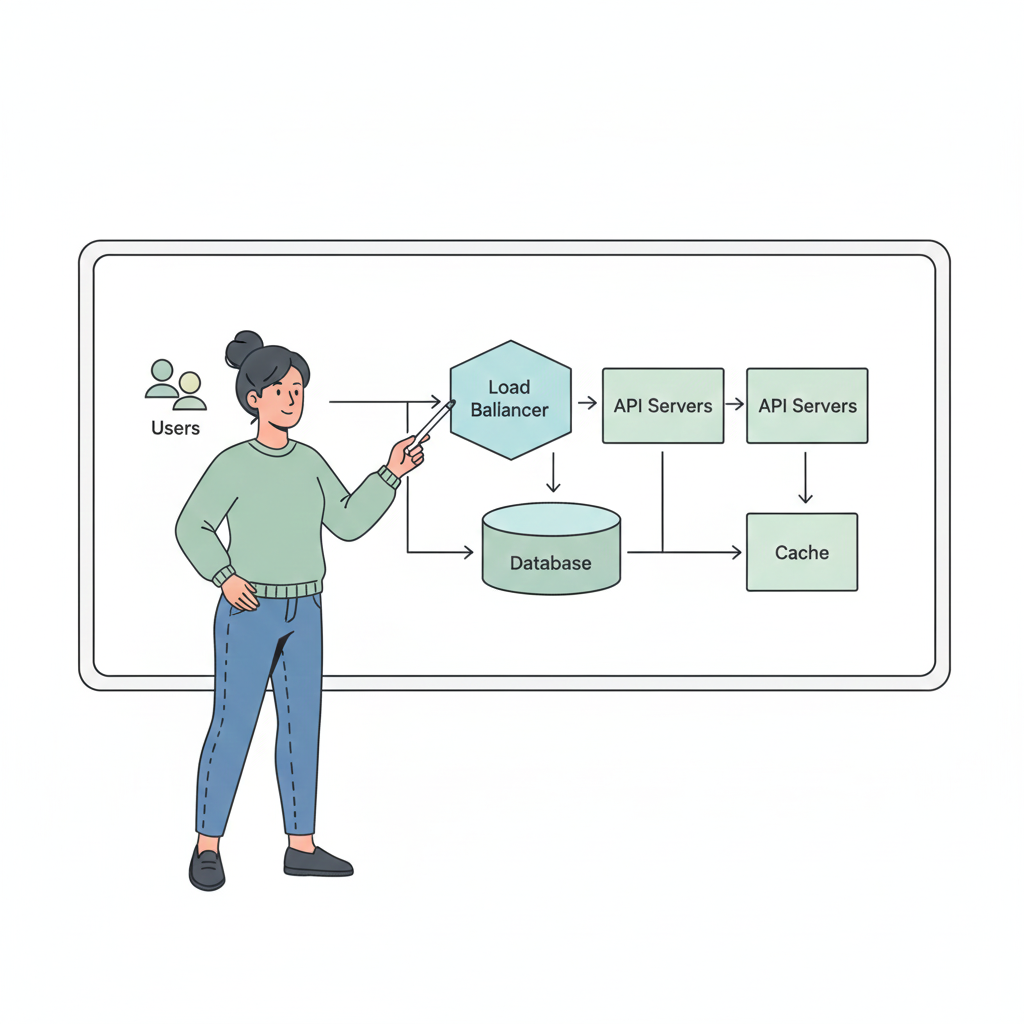

Efficient traffic management is critical for building scalable, reliable, and secure systems.

As systems grow, the number of users, requests, and services increases, making it essential to understand how traffic flows and how APIs are managed.

In this blog, we’ll cover load balancers, reverse proxies, API gateways, and rate limiting, with an example for each to make the concepts practical.

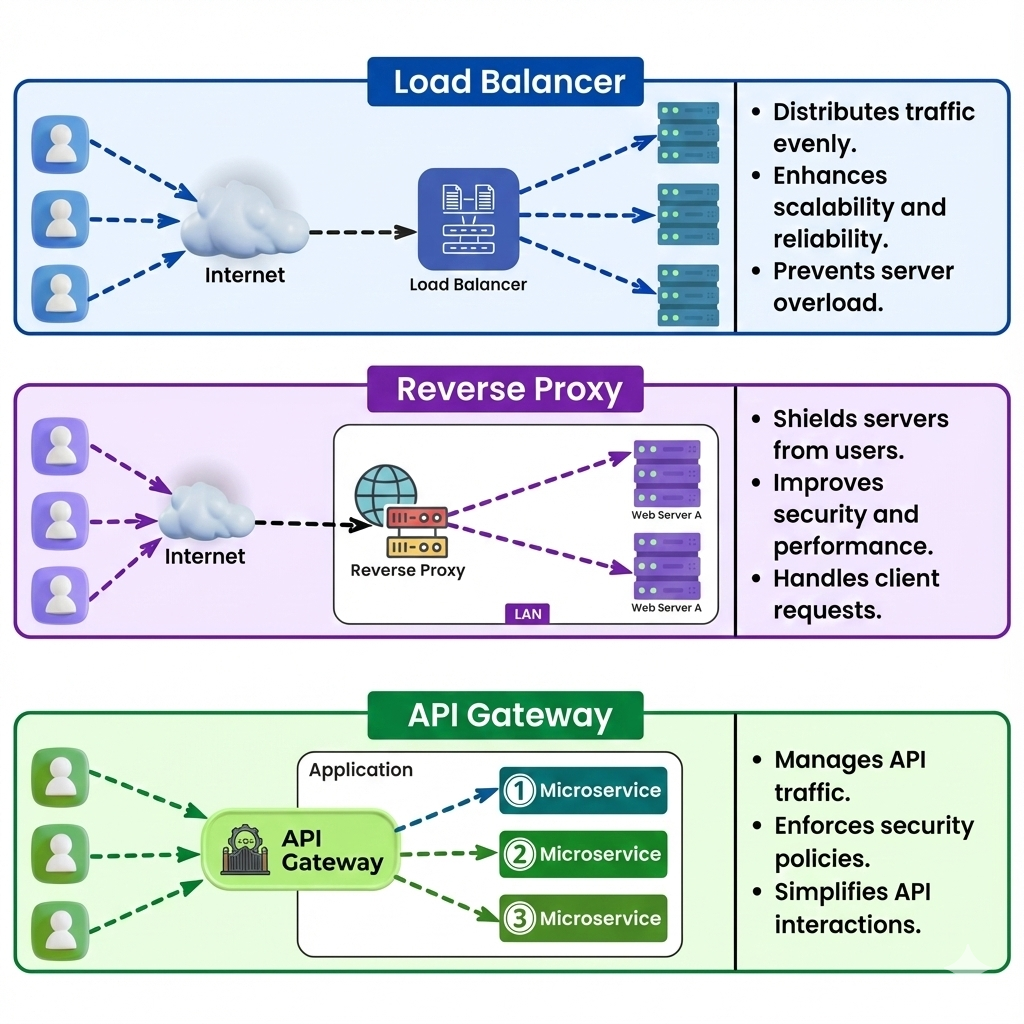

Load Balancers

A load balancer distributes incoming network or application traffic across multiple servers. The main goals are:

- Preventing any single server from being overwhelmed

- Improving reliability and uptime

- Optimizing response time

Example:

Imagine a website receiving 10,000 requests per second. Without a load balancer, a single server could crash under the load. With multiple servers behind a load balancer, traffic is spread across them so that no single server has to absorb the full load.
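One simple way to spread requests is round robin, where each new request goes to the next server in a fixed rotation. The sketch below is only an illustration of that idea: the class name `RoundRobinBalancer` and the server names are made up for this example, and real load balancers also account for health checks and server capacity.

```python
from collections import Counter
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backend servers in a fixed rotating order."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._rotation = cycle(list(servers))

    def pick(self):
        # Each call returns the next server in the rotation.
        return next(self._rotation)


# Hypothetical server names, used only for illustration.
balancer = RoundRobinBalancer(["server-a", "server-b", "server-c"])

# The first six requests walk through the rotation twice.
first_six = [balancer.pick() for _ in range(6)]

# Over many requests, every server receives the same share.
load = Counter(balancer.pick() for _ in range(9_000))
```

With three servers, the 9,000 simulated requests end up as 3,000 per server, which is the even split described above.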

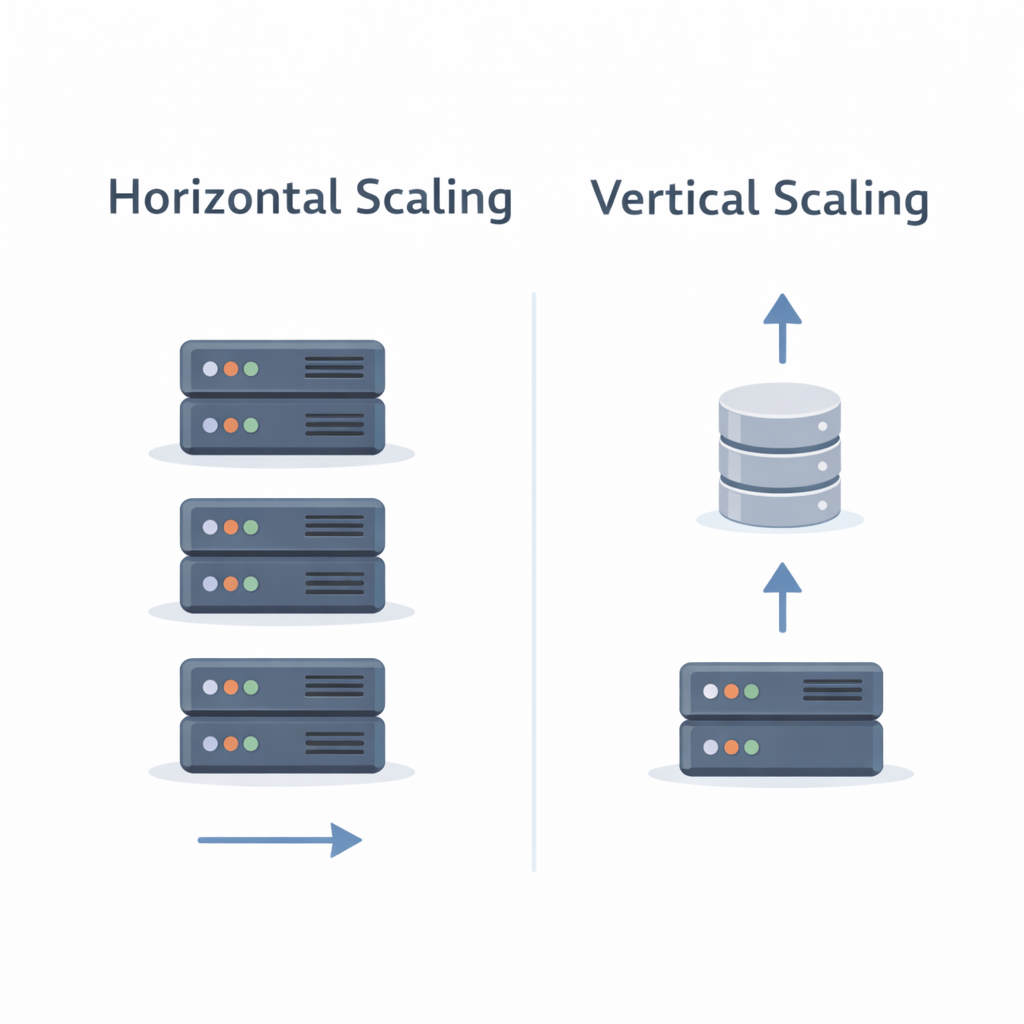

Layer 4 (L4) vs Layer 7 (L7) Load Balancing

- L4 Load Balancer: Operates at the transport layer (TCP/UDP). Routes traffic based on IP and port.

  - Pros: Fast, low overhead

  - Cons: Limited routing logic

- L7 Load Balancer: Operates at the application layer (HTTP/HTTPS). Can route based on URL, headers, cookies, or content.

  - Pros: Advanced routing, supports microservices and path-based rules

  - Cons: Slightly higher latency
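To make the L4 case concrete, here is a minimal sketch of a balancer that sees only addresses, ports, and the protocol. It hashes those fields so that all packets of one connection land on the same backend. The function name `pick_backend_l4` and all addresses are assumptions for illustration; hashing is one common approach, not the only one.

```python
import hashlib


def pick_backend_l4(src_ip, src_port, dst_ip, dst_port, protocol, backends):
    """Choose a backend from connection-level fields only (no HTTP data)."""
    if not backends:
        raise ValueError("at least one backend is required")
    key = f"{protocol}:{src_ip}:{src_port}:{dst_ip}:{dst_port}".encode()
    # A stable hash keeps every packet of a connection on one backend.
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


# Hypothetical addresses from documentation-reserved ranges.
backends = ["10.0.0.1", "10.0.0.2", "10.0.0.3"]
first = pick_backend_l4("203.0.113.7", 51324, "198.51.100.10", 443, "tcp", backends)
again = pick_backend_l4("203.0.113.7", 51324, "198.51.100.10", 443, "tcp", backends)
```

Nothing in this function can inspect a URL or a header, which is why L4 routing logic is limited.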

Example:

For an e-commerce app, L7 load balancing can route /api/products requests to a product service and /api/orders requests to an order service.
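A path-based rule like this can be sketched as a prefix lookup. The paths come from the example above. The service names, the `web-frontend` default, and the function name `route_l7` are assumptions made for this sketch.

```python
# Path prefixes from the example, mapped to hypothetical service names.
L7_ROUTES = {
    "/api/products": "product-service",
    "/api/orders": "order-service",
}


def route_l7(path, routes=L7_ROUTES, default="web-frontend"):
    """Return the service for a request path (query string already removed)."""
    # Check longer prefixes first so the most specific rule wins.
    for prefix in sorted(routes, key=len, reverse=True):
        if path == prefix or path.startswith(prefix + "/"):
            return routes[prefix]
    return default


product_target = route_l7("/api/products/42")
order_target = route_l7("/api/orders")
fallback_target = route_l7("/about")
```

Matching on `prefix + "/"` rather than on the bare prefix keeps a path such as `/api/productsX` from being sent to the product service by accident.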

Reverse Proxy vs Load Balancer

A reverse proxy sits between clients and servers, forwarding client requests to backend servers. It often provides:

- Caching to reduce server load

- SSL termination to handle HTTPS

- Security by hiding internal servers

Difference:

- A reverse proxy can act as a load balancer, but not all load balancers provide caching or SSL termination.

- Load balancers focus primarily on distributing traffic.

Example:

NGINX often functions as both a reverse proxy and a load balancer in modern web architectures.
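The caching role of a reverse proxy can be illustrated with a small in-memory sketch. This is not how NGINX is implemented or configured. The class `CachingReverseProxy`, its `ttl_seconds` parameter, and the fake backend are all hypothetical, and the current time is passed in explicitly to keep the example deterministic.

```python
class CachingReverseProxy:
    """Forwards GET requests to a backend and caches responses for a short time."""

    def __init__(self, backend, ttl_seconds=30.0):
        self._backend = backend      # callable: path -> response body
        self._ttl = ttl_seconds
        self._cache = {}             # path -> (expires_at, body)
        self.backend_calls = 0       # counts how often the backend was hit

    def get(self, path, now):
        entry = self._cache.get(path)
        if entry is not None and now < entry[0]:
            return entry[1]          # cache hit: the backend is not contacted
        self.backend_calls += 1
        body = self._backend(path)
        self._cache[path] = (now + self._ttl, body)
        return body


# Hypothetical backend that just echoes the requested path.
proxy = CachingReverseProxy(lambda path: f"body of {path}", ttl_seconds=30.0)

proxy.get("/home", now=0.0)    # miss: forwarded to the backend
proxy.get("/home", now=10.0)   # hit: served from the cache
calls_before_expiry = proxy.backend_calls
proxy.get("/home", now=31.0)   # entry expired: forwarded again
calls_after_expiry = proxy.backend_calls
```

Clients only ever talk to the proxy object, so the backend stays hidden, and repeated requests within the TTL never reach it.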

API Gateway

An API Gateway is a specialized reverse proxy for microservices. It acts as a single entry point for all client requests.

Responsibilities:

- Routing requests to the correct microservice

- Aggregating responses from multiple services

- Authentication and authorization

- Rate limiting and throttling

- Monitoring, logging, and analytics

Example:

In a ride-hailing app, the API gateway routes requests like /bookRide to the booking service, /getDriver to the driver service, and /payments to the payment service. It also validates user tokens before forwarding requests.
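The ride-hailing flow can be sketched as a single function that checks the token first and only then picks a service. The paths are taken from the example. The token table, the service names, and the status-code tuples are assumptions for illustration; a real gateway would verify signed tokens rather than look them up in a dictionary.

```python
# Hypothetical token store: token -> user id.
VALID_TOKENS = {"token-alice": "alice"}

# Paths from the example, mapped to hypothetical service names.
GATEWAY_ROUTES = {
    "/bookRide": "booking-service",
    "/getDriver": "driver-service",
    "/payments": "payment-service",
}


def handle_request(path, token):
    """Validate the caller, then decide which service receives the request."""
    user = VALID_TOKENS.get(token)
    if user is None:
        # Reject before any backend service is contacted.
        return 401, "invalid or missing token"
    service = GATEWAY_ROUTES.get(path)
    if service is None:
        return 404, f"no route for {path}"
    return 200, f"forwarded to {service} for user {user}"


booked = handle_request("/bookRide", "token-alice")
rejected = handle_request("/payments", "stolen-token")
unknown = handle_request("/unknown", "token-alice")
```

Because authentication happens in one place, the individual services do not each need their own token checks for client traffic.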

Rate Limiting Strategies

Rate limiting prevents servers from being overwhelmed and ensures fair usage. Common strategies include:

- Fixed Window: Count requests in fixed time intervals (e.g., 100 requests per minute)

- Sliding Window: Tracks requests over a rolling time window, which avoids the burst of up to twice the limit that a fixed window can let through around a window boundary

- Token Bucket: Allows bursts while controlling average request rate
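The difference between the first two strategies shows up at a window boundary. The sketch below is one possible in-memory implementation of each: the class names are made up for this example, the sliding window is implemented as a log of timestamps, and the time is passed in as `now` so the behavior is reproducible.

```python
from collections import deque


class FixedWindowLimiter:
    """Counts requests per fixed interval and resets at each boundary."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self._window_id = None
        self._count = 0

    def allow(self, now):
        window_id = int(now // self.window)
        if window_id != self._window_id:
            # A new interval has started, so the counter starts over.
            self._window_id = window_id
            self._count = 0
        if self._count < self.limit:
            self._count += 1
            return True
        return False


class SlidingWindowLimiter:
    """Keeps timestamps of accepted requests from the last window_seconds."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self._timestamps = deque()

    def allow(self, now):
        # Drop timestamps that have fallen out of the rolling window.
        while self._timestamps and self._timestamps[0] <= now - self.window:
            self._timestamps.popleft()
        if len(self._timestamps) < self.limit:
            self._timestamps.append(now)
            return True
        return False


# 100 requests just before a minute boundary, then 100 just after it.
burst_times = [59.0] * 100 + [60.0] * 100

fixed = FixedWindowLimiter(limit=100, window_seconds=60)
sliding = SlidingWindowLimiter(limit=100, window_seconds=60)

fixed_allowed = sum(fixed.allow(t) for t in burst_times)
sliding_allowed = sum(sliding.allow(t) for t in burst_times)
```

In this run the fixed window accepts all 200 requests within about one second, while the sliding window accepts only the first 100. The trade-off is memory: the sliding log stores one timestamp per accepted request.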

Example:

A messaging app may allow 60 messages per user per minute, using a token bucket so that a user can send a short burst of messages while the average rate stays within the limit.
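A token bucket for this case might look like the sketch below. It assumes a bucket that holds up to 60 tokens and refills at one token per second, which is one way to read "60 messages per minute". The class name `TokenBucket` and its parameters are hypothetical, and time is passed in as `now` to keep the example deterministic.

```python
class TokenBucket:
    """Allows bursts up to `capacity` while refilling at a steady rate."""

    def __init__(self, capacity, refill_per_second, now=0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._tokens = float(capacity)   # start with a full bucket
        self._last = now

    def allow(self, now, cost=1.0):
        # Add the tokens earned since the last call, capped at capacity.
        elapsed = max(0.0, now - self._last)
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        self._last = max(self._last, now)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


# Assumed reading of the limit: capacity 60, refill 1 token per second.
bucket = TokenBucket(capacity=60, refill_per_second=1.0)

# A sudden burst of 61 messages at t=0: the bucket covers the first 60.
burst_results = [bucket.allow(now=0.0) for _ in range(61)]

# Five seconds later, five tokens have been refilled.
later_results = [bucket.allow(now=5.0) for _ in range(6)]
```

Each user would get their own bucket, for example in a dictionary keyed by user id. The burst is served immediately, and after that the user is held to the refill rate.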

Key Takeaways

- Load balancers improve availability and reliability; choose L4 for speed and low overhead, L7 for advanced routing

- Reverse proxies provide caching, SSL termination, and added security

- API gateways are essential for managing microservices, security, and routing

- Rate limiting protects servers and ensures fair client usage

Understanding traffic flow and API management is essential before diving into database or microservices design. These concepts form the backbone of scalable systems.

What’s Next?

In the next blog, we’ll cover:

👉 Authentication, Authorization & Stateless Design

You’ll learn how to manage access, differentiate authentication vs authorization, and understand stateless vs stateful services.